In 1877 Arthur Conan Doyle, then a medical student, was sitting in one of Dr Joseph Bell’s outpatient clinics in Edinburgh when a lady came in with a child; she was carrying a small coat. Dr Bell asked her how the ferry crossing of the Firth of Forth had been that morning. Looking slightly askance, she replied:

“Fine thank you sir.”

He then asked what she had done with the younger child who had crossed with her.

Looking more astonished she said:

“I left him with my aunt, who lives in Edinburgh.”

Bell went on to ask whether she had walked through the Botanic Gardens on her way to his clinic and whether she still worked in the linoleum factory, and to both questions she answered in the affirmative.

Turning to the students, he explained:

“I could tell from her accent that she came from across the Firth of Forth, and the only way across is by the ferry. You noticed that she was carrying a coat which was obviously too small for the child she had with her, which suggested she had a younger child and had left him somewhere. The only place where you see the red mud that she has on her boots is the Botanic Gardens, and the skin rash on her hands is typical of workers in the linoleum factory.”

It was this study of Dr Joseph Bell’s diagnostic methods that led Conan Doyle to create the character of Sherlock Holmes.

A hundred years later, I was a young doctor. In 1977 CT scanners were still a rarity and MRI had not yet entered clinical practice. We were taught the importance of taking a detailed history, including the social history, and of performing a careful examination. We would recognise the RAF tie and the silver caterpillar badge (the silkworm, maker of parachute silk) on the lapel of a patient’s jacket. We would ask him when he had joined the Caterpillar Club and how many times he had had to bail out of his plane after being shot down during the war, each time a life saved by a silk parachute. We would notice the North Devon accent of a lady and ask when she had moved to Oxford.

The patient’s history gave 70% of the diagnosis, examination another 20% and investigation the final 10%. Patients came with symptoms and the doctor made a presumptive diagnosis, often correct, which was then confirmed by investigations. Screening for disease in people with no symptoms was in its infancy, and diseases were diagnosed by talking to patients, eliciting a clear history and performing a meticulous examination. No longer is that the case.

By the close of my career as a renal cancer surgeon, most patients arrived with a diagnosis already made on the basis of a CT scan, and small kidney cancers were often picked up incidentally in people with no symptoms. The time spent talking to patients was reduced. On the one hand, this means more patients can be seen; on the other, personal contact and empathy can be lost.

Patients lying in bed have sometimes been ignored, with the consultant and the team standing around the foot of the bed discussing the case amongst themselves or, once off the ward, speaking of the thyroid cancer in bed three or the colon cancer in bed two. Yet patients are people too, with histories behind them, and woe betide the medic, or indeed the government, who forgets that.

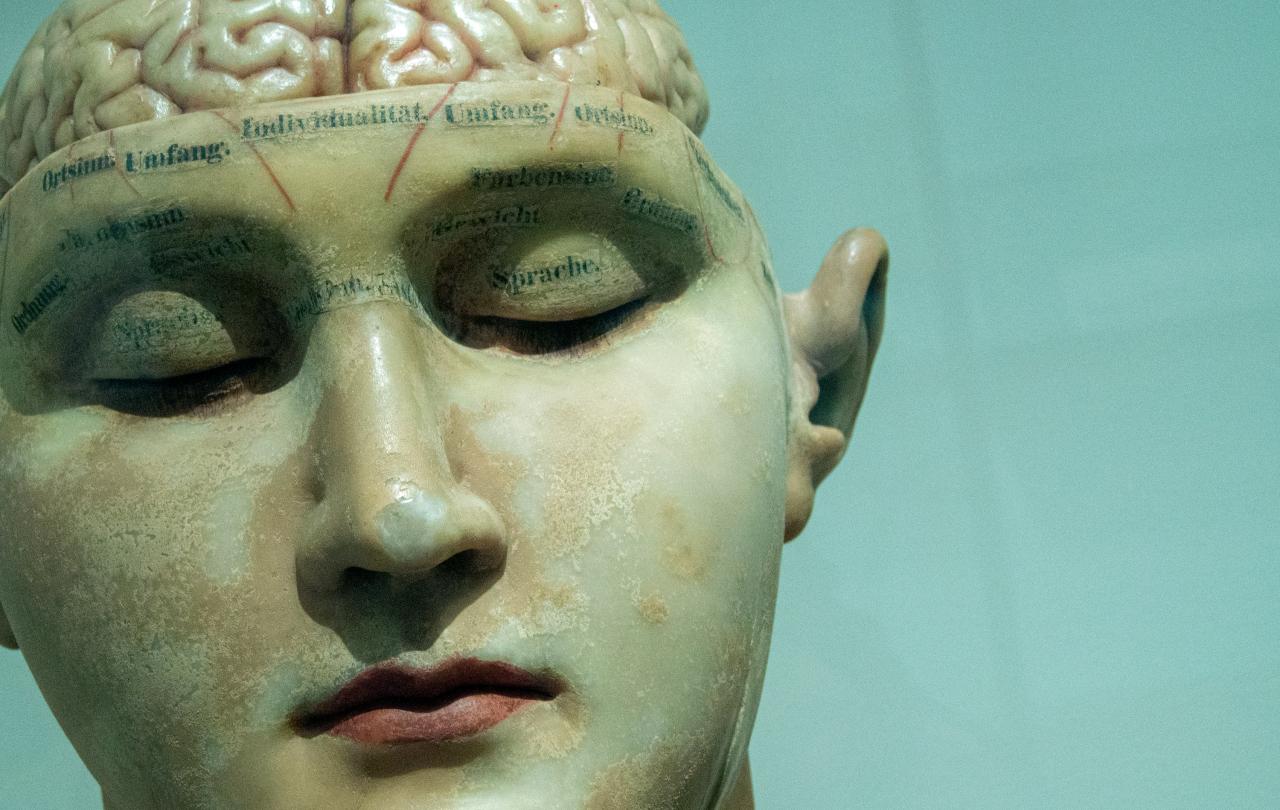

With computer-aided diagnosis, electronic patient records and ever more sophisticated investigations, the patient can easily become even more remote: an object rather than a person.

We speak today of more personalised medicine, with every person receiving treatment tailored on the basis of whole-genome sequencing and knowledge of each individual’s genetic make-up. But we need to be sure that this does not lead to less personalised medicine by forgetting the whole person: body, mind and spirit.

Since the Covid pandemic, more consultations have been conducted online or over the telephone, often with a doctor you do not know and have never met. Technology has tended to increase the distance between doctor and patient. The mechanisation of scientific medicine is here to stay, but the patient may well feel that the doctor is more interested in her disease than in her as a person. History taking and examination are less important for diagnosis than they once were, and remote medicine removes personal contact, including examination and touch.

Touching has always been an important part of healing. Sir Peter Medawar, who won the Nobel Prize in Physiology or Medicine, sums it up well. He asks:

‘What did doctors do with those many infections whose progress was rapid and whose outcome was usually lethal?’

He replies:

‘For one thing, they practised a little magic, dancing around the bedside, making smoke, chanting incomprehensibilities and touching the patient everywhere. This touching was the real professional secret, never acknowledged as the central essential skill.’

Touch has been described as the oldest and most effective act of healing.

Touch can reduce pain, anxiety and depression. There are occasions when one can communicate far more through touch than through words, for at times no words are good enough or holy enough to minister to someone’s pain.

Yet today, touching a patient without clear permission can leave people ill at ease and mistrustful, and can risk a justified accusation. It is a tightrope many have to walk very carefully. In an age of whole-person care, it is imperative that the right balance be struck. There is an ancient story that illustrates the power of that human connection in the healing process.

When a leper approached Jesus in desperation, Jesus did not simply offer a healing word from a safe distance. He stretched out his hand and touched him. He felt deeply for lepers, cut off as they were from all human contact. He touched the untouchables.

William Osler, a Canadian physician who was one of the founding fathers of Johns Hopkins Hospital in Baltimore and ended his career as Regius Professor of Medicine at Oxford, said:

“It is more important to know about the patient who has the disease than the disease that has the patient”.

For all the advantages modern medicine has to offer, it is vital to find ways to retain that personal element of medicine. Patients are people too.